Eval suites that catch drift before customers do: the 3-layer harness

A walkthrough of the 3-layer eval harness we ship with every production agent — prompt unit tests, property tests on outputs, and nightly drift detection on production traffic — with cost per layer, the specific failure modes each catches, and the decision to use LLM-as-judge only where it earns its keep.

When a customer reports that their agent "feels worse today," it's usually too late. The drift happened last week, compounded over the weekend, and the first person to notice was someone with a complaint, not an alert. That's the failure mode this post is about, and the three-layer harness below is how we stop it.

We're Autoolize, an AI automation studio and a member of the Claude Partner Network. Every one of the 40 production AI agents we shipped for ops teams between 2024 and 2026 (invoice OCR, inbound triage, vendor reconciliation, research agents, and a fair amount of human-in-the-loop work) went to production with the same three-layer eval harness described here. It's the CI for agents. It's the thing that catches regressions and drift hours before any user sees them, and it's the single most load-bearing engineering investment we make on every engagement.

Per the MIT NANDA GenAI Divide: State of AI in Business 2025 report, roughly 95% of enterprise generative AI pilots fail to produce measurable P&L impact [4]. In our field experience, the single biggest predictor of which projects cross into the 5% is whether the eval harness existed on day one, not which model, framework, or tool stack got picked. This post is the field guide to building the harness we use, layer by layer.

If you're comparison-shopping an eval stack, skim §1 for the three layers at a glance and §7 for the cost math. If you're mid-engagement and want help wiring evals into an agent already in production, book a strategy call — we'll map your workflow to the three layers in 20 minutes.

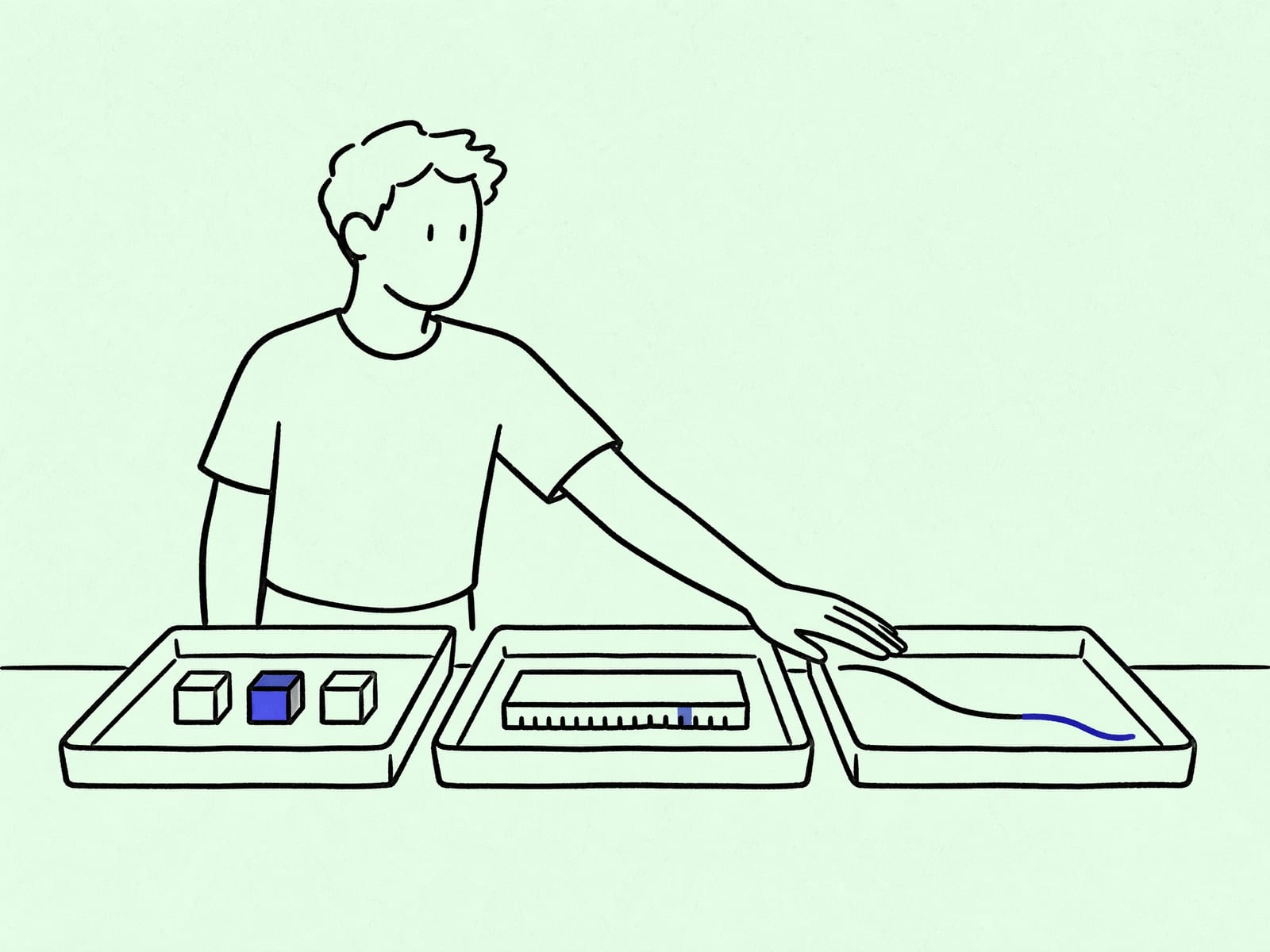

Quick overview — 3 layers at a glance

Three layers, each cheap, each catching a different class of failure. Ship all three together — skipping any one of them leaves a specific gap the other two cannot close.

| # | Layer | What it catches | Cost/month | Run cadence |
|---|---|---|---|---|
| 1 | Prompt unit tests | Known regressions on locked fixtures | ~$1–$5 | Every PR (CI gate) |
| 2 | Property tests on outputs | Unknown regressions + schema drift | ~$5–$20 | Every PR + sampled production traffic (hourly) |
| 3 | Golden-trace drift detection | Data drift + slow quality decay | ~$10–$40 | Nightly against production traffic |

The sorting rule. Build Layer 1 on the day you write the first prompt. Build Layer 2 before the agent sees production traffic. Build Layer 3 inside the first month of production. Any ordering that delays Layer 1 ("we'll add evals later") usually means six weeks later the team is reading Zap-history logs trying to explain a silent regression. We've seen that pattern enough times to treat Layer 1 as a non-negotiable part of the first week's scope.

Why LLM evals are not model benchmarks

A quick terminology reset, because this is where half the eval confusion in ops teams lives. Model benchmarks (MMLU, HumanEval, GPQA, SWE-bench) measure raw model capability on standardized tasks. They're published by model vendors and academic labs; they're useful when picking a model family. But they are not what your production agent needs.

An LLM eval is a test against your workflow — your data, your prompts, your tools, your expected outputs. The eval harness in this post is entirely about LLM evals, not model benchmarks. A model that tops the MMLU leaderboard can still regress catastrophically on your invoice-extraction task when its fine-tuning window shifts, and no benchmark table is going to surface that for you. Your golden trace on your invoice corpus will.

Anthropic's own engineering guidance on this is clear: the grader types in order of preference are deterministic first, LLM-judge second, human-judge last [2]. OpenAI Evals takes the same shape: the framework is designed so your eval is a function from (input, model_output) to a score, and the function can be any Python code, not just an LLM call [1]. The reliability limits of LLM-as-judge are now well-documented in the academic literature [3], which is why we lean hard on deterministic assertions for the first two layers and save LLM-judge for the third.
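The "function from (input, model_output) to a score" shape can be sketched in a few lines. This is a minimal illustration and not the OpenAI Evals API; `Grader`, `exact_label_grader`, and `grade_refund` are hypothetical names introduced here.

```python
from typing import Callable

# A grader maps (input, model_output) to a score in [0, 1].
Grader = Callable[[str, str], float]


def exact_label_grader(expected: str) -> Grader:
    """Build a deterministic grader that passes only on an exact label match."""

    def grade(_input: str, model_output: str) -> float:
        # Surrounding whitespace is ignored; case is not, so "REFUND" fails.
        return 1.0 if model_output.strip() == expected else 0.0

    return grade


grade_refund = exact_label_grader("refund")
```

Because the grader is plain Python, a deterministic check and an LLM-judge call can sit behind the same signature, which is what lets the harness prefer the deterministic one wherever it is available.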

Three implications for how we build the harness:

- Evals are owned by the team that owns the agent. Benchmarks are published by model vendors; evals are written by you against your data. Outsourcing eval design is nearly always wrong.

- Evals are part of the product. The golden traces, property assertions, and drift thresholds live in the same repo as the agent code. They ship together; they version together.

- Evals are the success criterion. A golden trace is a written-down statement of "this is what correct looks like." Agents without golden traces are running on opinion.

Layer 1 — prompt unit tests (cheap, gate CI)

What it catches

Known regressions. The agent returns `refund` today on "I want my money back"; after a prompt edit, it returns `REFUND` or `complaint` instead. Layer 1 catches that inside 2 minutes, before the PR merges.

This is the layer that catches 80% of the bugs we've caught across two years of running this, at roughly 2% of the total eval cost. Teams that skip Layer 1 chasing "fancy eval frameworks" are spending 5x to solve 20% of the problem.

Test shape

A small set of frozen input/output pairs per prompt. Each pair is a tuple of (input, required output property). Examples:

- The refund-intent classifier must return exactly `refund` on "I want my money back."

- The invoice extractor must pull `total: 42.00` from the canonical invoice fixture.

- The support-triage agent must return priority `p1` for any input containing the literal phrase "production down."

The assertions are deterministic. No LLM-judge in Layer 1 — if you can't write a deterministic check for your required property, the property is probably not locked enough to test yet.
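A minimal sketch of what a Layer 1 gate might look like, assuming the agent can be called as a plain function. `classify_intent` and `triage_priority` are hypothetical stand-ins for real model calls, and `run_layer1` is an illustrative helper, not our actual wrapper.

```python
def classify_intent(text: str) -> str:
    """Hypothetical stand-in for the refund-intent classifier call."""
    return "refund" if "money back" in text.lower() else "other"


def triage_priority(text: str) -> str:
    """Hypothetical stand-in for the support-triage agent call."""
    return "p1" if "production down" in text.lower() else "p3"


# Frozen (agent, input, required output) tuples.
FIXTURES = [
    (classify_intent, "I want my money back.", "refund"),
    (triage_priority, "URGENT: production down since 09:00", "p1"),
]


def run_layer1(fixtures):
    """Return the failing fixtures; an empty list means the CI gate passes."""
    failures = []
    for agent, text, expected in fixtures:
        actual = agent(text)
        if actual != expected:  # exact match, so "REFUND" would fail
            failures.append((agent.__name__, text, expected, actual))
    return failures
```

In a pytest suite the same tuples would typically feed a parametrized test, so each fixture shows up as its own pass or fail line in CI.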

How we run it

Every pull request. A change to a prompt that breaks any Layer 1 fixture fails CI and the PR can't merge. We use pytest with the pytest-asyncio plugin and a thin wrapper that calls the model once per fixture; the whole suite runs in under 90 seconds for agents with ~50 fixtures.

Fixtures live in `evals/layer1/<agent_name>/*.json`, one file per scenario. The file stores (input, expected-property, reason-it-matters). The "reason-it-matters" field is the thing most teams skip and regret later: a year in, nobody remembers why a fixture exists, and someone deletes it during a clean-up.
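One way to keep the "reason-it-matters" field from being skipped is to make the loader reject fixtures without it. The sketch below assumes the JSON keys are named `input`, `expected`, and `reason`; those key names and the `load_fixtures` helper are illustrative, not a fixed format.

```python
import json
from pathlib import Path

# Assumed key names for the (input, expected-property, reason-it-matters) tuple.
REQUIRED_KEYS = {"input", "expected", "reason"}


def load_fixtures(agent_dir: Path) -> list[dict]:
    """Load every *.json fixture in a directory, enforcing the required keys."""
    fixtures = []
    for path in sorted(agent_dir.glob("*.json")):
        data = json.loads(path.read_text(encoding="utf-8"))
        missing = REQUIRED_KEYS - data.keys()
        if missing:
            raise ValueError(f"{path.name}: missing keys {sorted(missing)}")
        if not str(data["reason"]).strip():
            raise ValueError(f"{path.name}: 'reason' must not be empty")
        fixtures.append(data)
    return fixtures
```

Failing at load time means a fixture without a stated reason never makes it into the suite in the first place.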

What it costs

Per run: under $1 for most agents. Monthly: $1–$5 per agent depending on PR frequency. This is the cheapest defense you can build and it buys the most protection. Every engagement we've ever shipped has Layer 1 live by end of week 1.

Layer 2 — property tests on outputs

What it catches

Unknown regressions and schema drift. Prompt unit tests catch the regressions you anticipated; property tests catch the ones you didn't. In two years of running this we've caught silent schema drift from model version upgrades three separate times, all within the first hour of the upgrade hitting production.

Test shape

For every agent output, a list of structural properties that must always hold regardless of input. The properties are closer to invariants than to expectations:

- A classification output must be one of the enum values. Never a free-form string.

- A drafted email must be under the token limit and must not contain a literal `{{placeholder}}` the template forgot to fill.

- A scored lead must return a number in `[0, 1]`.

- An extracted invoice total must be a positive decimal, not a currency-formatted string.

- A tool-call argument must conform to the tool's JSON schema.

These are the rules of the game, not the expected outputs. They hold for inputs we've never seen, which is what makes them catch unknown regressions.
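Several of the invariants above can be written as small pure functions. This is a sketch under assumptions: the `CATEGORIES` enum is made up for illustration, and the tool-call JSON-schema check is left out because it needs a schema validator library.

```python
import re
from decimal import Decimal

CATEGORIES = {"refund", "complaint", "other"}  # hypothetical enum values


def is_enum_value(output: str) -> bool:
    """Classification output must be exactly one of the enum values."""
    return output in CATEGORIES


def has_unfilled_placeholder(email: str) -> bool:
    """True when a literal {{...}} template slot survived into the draft."""
    return re.search(r"\{\{.*?\}\}", email) is not None


def is_unit_score(value) -> bool:
    """Lead score must be a real number in [0, 1]; booleans do not count."""
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return False
    return 0 <= value <= 1


def is_positive_decimal(value: str) -> bool:
    """Plain positive decimal; rejects "$42.00", "1,200.00" and "0.00"."""
    if not re.fullmatch(r"\d+(\.\d+)?", value):
        return False
    return Decimal(value) > 0
```

None of these functions needs to know what the input was, which is why the same checks run unchanged on inputs the team has never seen.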

The word "property" here is borrowed from property-based testing in the software world. Anthropic published a nice writeup on using Claude itself to write property-based tests, which is worth reading for the meta-application, though in this post we mean human-written property assertions [2].

How we run it

Two surfaces.

CI on every PR against the Layer 1 fixtures. This catches property violations in the fixture inputs themselves before merge.

Sampled production traffic, usually 50 samples per agent per hour, logged to a Postgres table. A property violation is a page — literal page, PagerDuty or equivalent. The sample rate is tuned per-agent: higher-volume agents can sample more; lower-volume agents run all of production through the property tests because the per-run cost is trivial.
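The hourly sweep can be sketched as a sampling step followed by the property checks. This assumes the hour's outputs are already in memory; `hourly_sweep` is a hypothetical helper, and both the Postgres logging and the paging call are left out.

```python
import random


def hourly_sweep(outputs, checks, sample_size=50, rng=None):
    """Run property checks on a sample and return (check_name, output) violations.

    When the hour produced no more than `sample_size` outputs, every output
    is checked, which matches the low-volume case described above.
    """
    rng = rng or random.Random()
    outputs = list(outputs)
    if len(outputs) <= sample_size:
        sample = outputs
    else:
        sample = rng.sample(outputs, sample_size)

    violations = []
    for output in sample:
        for name, check in checks.items():
            if not check(output):
                violations.append((name, output))
    return violations
```

A non-empty return value is what would trigger the page; the sample size is a parameter so it can be tuned per agent.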

What it costs

$5–$20/month per agent at 50 samples/hour. Scales linearly with the sample rate. This layer pays back the fastest: in three separate incidents we caught a model-version regression within the first hour, at a cost that would otherwise have been days of downstream data corruption.

Layer 3 — golden-trace drift detection

What it catches

Slow quality decay. Data drift. The model is still returning valid JSON, still hitting property assertions, still passing Layer 1 — but the outputs are subtly worse than they were six weeks ago, because the inputs have shifted. Layer 3 is the layer that catches "feels worse" before a customer can.

Test shape

A curated set of "golden traces" — real production inputs with outputs we've manually reviewed and locked. Each golden trace is a tuple of (input, locked-output, date-locked, domain-expert-reviewer). The critical piece is the locked output: this is the human-reviewed correct answer, not a heuristic.
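The golden-trace tuple maps naturally onto a small immutable record. The sketch below is illustrative: the field names follow the tuple above, while `due_for_review` and its 90-day default are assumptions chosen to line up with a quarterly re-curation cycle.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class GoldenTrace:
    """One locked, human-reviewed (input, output) pair."""

    input: str
    locked_output: str
    date_locked: date
    reviewer: str

    def age_days(self, today: date) -> int:
        return (today - self.date_locked).days


def due_for_review(traces, today, max_age_days=90):
    """Return traces locked more than `max_age_days` ago (assumed threshold)."""
    return [t for t in traces if t.age_days(today) > max_age_days]
```

Storing the lock date and reviewer alongside the output makes it possible to ask later who decided this was correct, and when.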

For classification and extraction agents the golden-output is exact-match comparable. For open-ended outputs (drafted emails, research summaries, multi-step responses) we score semantic equivalence — the string-match approach doesn't work because LLMs paraphrase, and paraphrasing a good answer should still pass.

How we run it

Nightly. Every agent re-runs against its golden-trace set; the outputs are scored against the locked answers using a combination of deterministic matching (where possible) and LLM-as-judge (where paraphrase makes deterministic matching wrong). The judge is always a smaller, cheaper model than the agent itself (we usually use Claude Haiku 4.5 to judge Claude Sonnet 4.6 outputs) to keep the cost contained and to reduce the risk of judge-model drift tracking agent-model drift.

We track the equivalence rate as a time series. The pattern we watch for is gradual decay: 94% → 91% → 87% over three weeks. That used to generate a customer complaint in week four. Now it generates a Slack alert in week one.
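A minimal sketch of a gradual-decay check on the nightly equivalence rate. The baseline, the three-night window, and the 3-point drop threshold are illustrative assumptions, not the thresholds we run in production.

```python
def decay_alert(rates, baseline, max_drop=0.03, window=3):
    """True when the mean of the last `window` nightly rates sits more than
    `max_drop` below the baseline equivalence rate.

    Averaging over a window keeps a single noisy night from firing the alert.
    """
    if len(rates) < window:
        return False  # not enough history yet
    recent_mean = sum(rates[-window:]) / window
    return (baseline - recent_mean) > max_drop


# Slow slide from a 94% baseline versus ordinary night-to-night noise.
sliding = [0.94, 0.94, 0.93, 0.92, 0.91, 0.91, 0.90]
stable = [0.94, 0.93, 0.95, 0.94]
```

With these numbers `decay_alert(sliding, baseline=0.94)` fires and `decay_alert(stable, baseline=0.94)` does not, so the alert triggers on the slide well before the rate reaches the high 80s.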

The academic literature on LLM-as-judge reliability is worth reading before relying on it as your sole grading layer [3]. Our working mitigations: always use a different model as judge than the agent being graded, always cross-check the judge against a small human-reviewed sample monthly, and never use the judge for the first two layers.
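The monthly cross-check reduces to an agreement rate between judge verdicts and human verdicts on the same sample. This is a sketch; `judge_agreement` is a hypothetical helper, and what counts as an acceptable agreement rate is left to the team.

```python
def judge_agreement(judge_verdicts, human_verdicts) -> float:
    """Fraction of sampled items where the LLM judge and the human reviewer
    reached the same pass/fail verdict."""
    if len(judge_verdicts) != len(human_verdicts):
        raise ValueError("verdict lists must be the same length")
    if not judge_verdicts:
        raise ValueError("need at least one reviewed sample")
    matches = sum(j == h for j, h in zip(judge_verdicts, human_verdicts))
    return matches / len(judge_verdicts)
```

Tracking this number month over month gives an early signal that the judge itself has shifted, separate from any change in the agent.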

What it costs

$10–$40/month per agent, scaling with golden-trace count and judge model. For a typical agent with 50 golden traces running nightly, the model cost is $10–$15/month. The bigger cost is engineering time: re-curating the golden traces quarterly takes roughly an engineer-day per agent.

What ships in practice — 3 real examples

Three quick cases to make the layers concrete.

Example 1 — invoice OCR. Layer 1: 40 golden invoices with locked total, vendor, date fields. Layer 2: output JSON validates against schema; total is a positive decimal; vendor is a non-empty string. Layer 3: 200 anonymized production invoices per night, with LLM-judge on a field-by-field basis (is the extracted vendor name semantically the same as the locked one, handling abbreviations and punctuation). Caught a 30% accuracy drop on a specific vendor template when the model version shifted; root cause was a tokenizer change that affected a specific whitespace pattern in that vendor's header. The Layer 3 alert fired the morning after the model rolled out; the fix was a one-line prompt tweak.

Example 2 — inbound triage classifier. Layer 1: 60 golden tickets with locked category + priority. Layer 2: category is one of the 12 enum values; priority is p1/p2/p3/p4. Layer 3: 100 production tickets per night, scored against the locked category with deterministic exact-match (no LLM-judge — the output is a label, not prose). Caught a silent schema regression where the model started returning p-1 instead of p1 after a provider-side update, which would have broken the downstream routing system. Layer 2 property test fired within 40 minutes of the first bad output.

Example 3 — research agent. Layer 1: 20 golden questions with locked answers and locked source documents. Layer 2: answer contains at least one citation; no citation points to a non-existent source; the answer length is within bounds. Layer 3: 30 production questions per night, scored on faithfulness (every claim in the answer must be supported by a retrieved source) via LLM-judge, and on answer-quality via a rubric-based score. Caught a retrieval-layer regression where a reranker update started demoting the best source documents; the faithfulness score on the golden set dropped from 96% to 87% over a week before an alert fired.
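The two citation properties from Example 3 can be checked deterministically. The sketch assumes citations appear as bracketed numbers such as `[1]` and that retrieved sources carry matching string ids; both are assumptions about format, and `citation_violations` is a hypothetical helper.

```python
import re


def citation_violations(answer: str, source_ids: set[str]) -> list[str]:
    """Return property violations for a research answer.

    Checks two invariants: the answer cites at least one source, and every
    cited id exists among the retrieved sources.
    """
    cited = re.findall(r"\[(\d+)\]", answer)
    problems = []
    if not cited:
        problems.append("no citation")
    for cid in sorted(set(cited) - source_ids):
        problems.append(f"citation [{cid}] has no matching source")
    return problems
```

Faithfulness (whether the cited source actually supports the claim) still needs the Layer 3 judge; this check only rules out missing and dangling citations.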

Cost and cadence — how we run this

Fleet-level numbers across our 40 production agents.

Per-agent monthly eval cost: $20–$80 total across all three layers. Layer 1 negligible, Layer 2 $5–$20, Layer 3 $10–$40. Scales with golden-trace count and judge model choice, not with traffic volume (we sample production traffic rather than testing every input).

Per-agent engineering cost:

- Bootstrap (Layers 1+2): 3–5 engineer-days per agent.

- Golden-trace curation (Layer 3): 1–2 engineer-days per agent, followed by 1 engineer-day/quarter for re-curation.

- Ongoing maintenance: 1–3 hours/week per agent, mostly reviewing the nightly alerts and adjusting thresholds.

Fleet-level platform cost:

- 50k eval runs/day across the fleet at an average $0.002/run = $100/day ≈ $3k/month in model fees.

- Eval result storage (Postgres, ~6 months retention) + dashboarding ≈ $400/month.

- Alerting infra (PagerDuty + Slack integrations) ≈ $200/month.

- Human time reviewing: roughly 2 engineer-hours/day across all 40 agents.
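The fleet-level arithmetic above can be written out directly. The run count, per-run cost, and infra figures are the ones quoted in the list; the 30-day month is an assumption.

```python
RUNS_PER_DAY = 50_000      # eval runs/day across the fleet
COST_PER_RUN = 0.002       # average model cost per run, USD
DAYS_PER_MONTH = 30        # assumption for the monthly estimate
AGENTS = 40

STORAGE_AND_DASHBOARDS = 400   # USD/month
ALERTING_INFRA = 200           # USD/month

daily_model_cost = RUNS_PER_DAY * COST_PER_RUN
monthly_model_cost = daily_model_cost * DAYS_PER_MONTH
monthly_platform_cost = monthly_model_cost + STORAGE_AND_DASHBOARDS + ALERTING_INFRA
model_cost_per_agent = monthly_model_cost / AGENTS

print(f"model fees:  ${daily_model_cost:,.0f}/day, ${monthly_model_cost:,.0f}/month")
print(f"platform:    ${monthly_platform_cost:,.0f}/month")
print(f"per agent:   ${model_cost_per_agent:,.0f}/month in model fees")
```

Spread evenly over the fleet, the model fees come to about $75 per agent per month, which lands near the top of the $20–$80 per-agent range.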

Run cadence.

- Layer 1: every PR (~5–15 PRs/day fleet-wide).

- Layer 2: every PR + hourly 50-sample sweep on production traffic.

- Layer 3: nightly, windowed to off-hours to keep cost predictable.

The rule we use for sizing: eval spend should sit at 3–10% of agent-run spend. Below 3%, the harness is probably too thin to catch slow drift. Above 10%, you're either over-sampling or running LLM-judge in places deterministic assertions would do.
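The sizing rule is simple enough to encode as a check on monthly spend. The function name and the status labels below are illustrative; the 3% and 10% bounds come from the rule above.

```python
def eval_spend_status(eval_spend: float, agent_run_spend: float) -> str:
    """Classify eval spend as a share of agent-run spend (same currency, same period)."""
    if agent_run_spend <= 0:
        raise ValueError("agent_run_spend must be positive")
    ratio = eval_spend / agent_run_spend
    if ratio < 0.03:
        return "too thin"       # likely to miss slow drift
    if ratio > 0.10:
        return "over-spending"  # over-sampling or too much LLM-judge
    return "in range"
```

For example, $50 of eval spend against $1,000 of agent-run spend is 5% and comes back as "in range".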

Lessons from 2+ years of running it

Most drift is not model drift. It's data drift — the inputs shifted, and the agent's implicit assumptions broke. The eval harness surfaces this faster than any model-versioning strategy, because Layer 3's golden traces are your snapshot of "what inputs used to look like" and the production traffic it scores against is "what inputs look like now." The gap between them is the data drift.

The cheapest layer catches the most bugs. Layer 1 is 80% of the value. Don't skip it chasing fancy eval frameworks. A team that has Layer 1 live and nothing else will catch more regressions than a team with a sophisticated Layer 3 dashboard and no Layer 1 on PRs.

Golden traces decay. The "truth" labels we locked a year ago are sometimes wrong now, because the business changed. We budget an engineer-day/quarter to re-curate them. Teams that skip this step discover after a year that their eval dashboard is green while actual agent quality has drifted — the dashboard is now measuring the wrong thing.

Judge drift is real. When the judge model updates, the Layer 3 equivalence rate can shift without any change in the agent. We mitigate by using a smaller, slower-moving judge model (Haiku tier), cross-checking a 5% sample against human review monthly, and treating sudden step-changes in the equivalence rate as "investigate the judge first" rather than "investigate the agent first."

Evals are how you survive model updates. Claude Opus 4.7's April 2026 tokenizer change and GPT-5.4's sandbox behavior update both would have caused a scramble without a harness in place. With one, each was a Tuesday: the nightly Layer 3 runs flagged the agents affected, the Layer 1 fixtures were re-run against the new model, and the agents that still passed kept running while the ones that regressed got a one-line prompt tweak.

The ownership model matters more than the framework choice. OpenAI Evals [1], DeepEval, promptfoo, and LangSmith are all fine choices for the framework layer. The part that determines whether the harness actually catches anything is whether a named engineer is on call for the alerts it produces. A dashboard nobody watches is decoration.

Further reading

- Agentic AI for ops teams: 6 patterns we ship — the pattern library whose eval hooks §3–§8 each reference back to this harness.

- Claude Agent SDK production playbook — the runtime patterns the harness wraps; Pattern 5 walks through wiring it specifically into the SDK.

- OpenAI Agent Builder vs Claude Agent SDK — how the framework choice affects where Layer 2 and Layer 3 have to live.

- When Zapier stops being the answer — the observability side (Signal 4) of what lives alongside an eval harness in a production pipeline.

Sources

1. OpenAI. Evals: framework for evaluating LLMs and LLM systems. github.com/openai/evals

2. Anthropic Engineering. Demystifying evals for AI agents. anthropic.com/engineering/demystifying-evals-for-ai-agents

3. Li et al. A Survey on LLM-as-a-Judge. arXiv:2411.15594 (updated Oct 2025). arxiv.org/abs/2411.15594

4. The GenAI Divide: State of AI in Business 2025. MIT Media Lab, NANDA initiative. media.mit.edu/groups/nanda

Originally published April 8, 2026. Refreshed April 23, 2026 with expanded layer walkthroughs, real production examples, 4 verified citations, and a 10-question FAQ. If you want help wiring this harness into an agent already in production, book a strategy call — 30 minutes, no proposal push.

Frequently asked questions

What is an LLM eval harness?

An LLM eval harness is the set of automated tests that decide whether a change to a production LLM agent is safe to ship. It's the CI for agents — the thing that turns "the model feels different today" into a numeric regression on a dashboard before any user notices. A good harness has at least three layers (unit tests on prompts, property tests on outputs, nightly drift detection on production traffic), each catching a different failure mode.

Why do LLM evals matter more in 2026 than in 2024?

Because the models are moving faster. Claude Opus 4.7 dropped a new tokenizer in April 2026 that made the same input up to 35% more expensive; GPT-5.4's April 2026 update changed default sandbox behavior for tool-use. Agents that pinned to "claude-opus" or "gpt-5" as a moving alias silently shifted cost and behavior that week. Without an eval harness the only way teams noticed was customer complaints. With one, the regression surfaced in the nightly Slack alert on April 16.

How many eval cases do I need to start?

Start with about 20 golden traces per agent: input/output pairs where the output is a locked, hand-reviewed baseline. That's enough to catch roughly 80% of the regressions we've caught in production over two years. Growing the set to 200–500 over the first six months raises the catch rate to ~95%. Trying to ship with "hundreds of evals on day one" usually means a team builds brittle tests against outputs that aren't actually locked, which then break on every legitimate prompt change.

When should I use LLM-as-judge vs deterministic assertions?

Deterministic first, LLM-judge only where it earns its keep. Anthropic's own guidance says the same: use deterministic graders where possible, LLM graders where necessary, and human graders judiciously [2]. Classification outputs, field extractions, and numeric scores belong in deterministic assertions: cheaper, faster, and no judge-model drift risk. LLM-judge belongs on open-ended outputs (drafted emails, summaries, multi-step research answers) where paraphrase is legitimate and string match fails. The recent academic survey of LLM-as-judge systems flags reliability limits that are worth reading before betting a critical path on one [3].

How much does a 3-layer eval harness cost to run?

Across our 40 production agents, the eval harness adds roughly $20–$80/month per agent on top of the agent's own run cost. Layer 1 is essentially free (under $1 per run, $1–$5/month), Layer 2 adds $5–$20/month at a sampling rate of 50 samples/hour, and Layer 3 adds $10–$40/month depending on golden-trace count and LLM-judge cost. The engineering cost dominates the model cost: plan for 3–5 engineer-days to bootstrap Layers 1+2 per agent, and an engineer-day per quarter to re-curate the golden traces as the business shifts.

How do I handle golden-trace decay?

Budget for it explicitly. After a year, 10–20% of our golden-trace "correct" outputs are either outdated (business rules changed) or simply wrong (we were generous when we locked them). We re-review the whole golden set once a quarter, drop the ones no longer relevant, and re-lock any that drifted. That is roughly one engineer-day per quarter per agent. Teams that skip this step discover over 12 months that their eval dashboards are green while actual agent quality has shifted; the dashboard is now measuring the wrong thing.

What are the common failure modes an eval harness catches in production?

Four common ones. First, silent schema drift on a model version upgrade — the agent starts returning malformed JSON because the new model fine-tuning changed default formatting. Second, prompt regression from an intentional edit that broke an edge case nobody tested for. Third, tool-use misfires — the agent starts passing wrong argument types because the tool description drifted. Fourth, faithfulness regression on research outputs — the agent starts confabulating details when the retrieval layer subtly changes. All four are caught by the three layers at different depths.

Can I use OpenAI Evals or DeepEval instead of building custom?

Yes, as a starting point. OpenAI Evals [1] is the best-known open-source framework (~17k stars as of early 2026) and ships a registry of benchmark evals you can adapt. DeepEval, promptfoo, and LangSmith cover the same ground with different ergonomics. All of them work fine for Layers 1 and 2. Layer 3 (drift detection on production traffic) is usually custom because it has to integrate with your observability stack, your golden-trace store, and your alerting; frameworks can help with the scoring function but the plumbing is yours.

Should I run evals on every pull request or just nightly?

Layer 1 on every PR (gate CI; under $1 per run; fast feedback). Layer 2 on every PR for the affected agents (cheap; catches most regressions). Layer 3 nightly against production traffic samples (the one that catches drift rather than regression). Running Layer 3 on every PR is expensive and doesn't add much signal: drift is a time-series phenomenon, not a single-commit phenomenon.

What does the MIT "95% of AI pilots fail" finding say about evals?

The MIT NANDA GenAI Divide: State of AI in Business 2025 report attributes most enterprise AI failures to missing success criteria and no owner past launch [4], both of which an eval harness directly addresses. A golden trace is the success criterion, written down and testable. An on-call owner watching the eval dashboard is the post-launch owner the failing pilots lacked. In our field experience, the single biggest predictor of a shipped agent that pays back is whether the eval harness was designed on day one, not bolted on after a regression.
